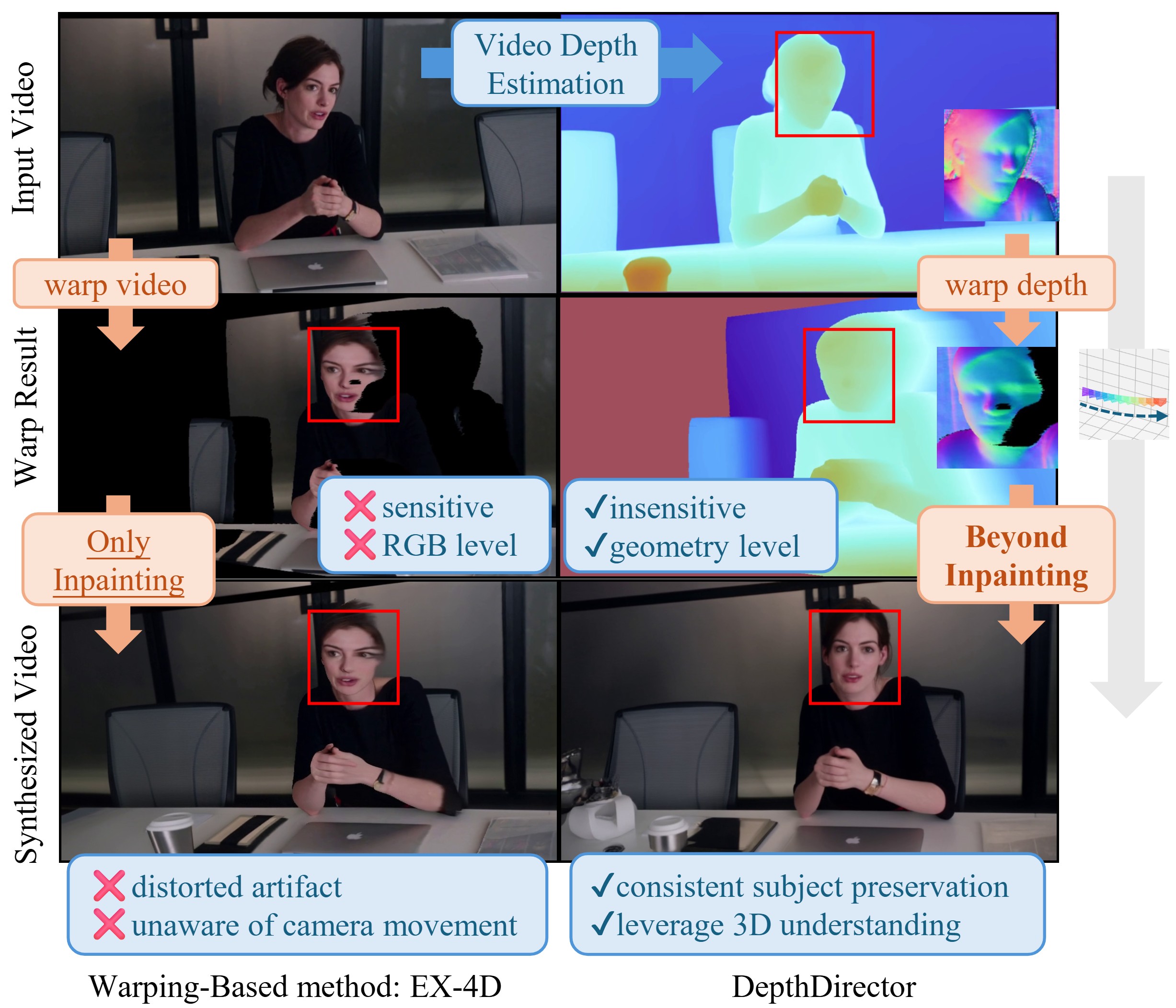

TL;DR: We propose DepthDirector, a video re-rendering framework designed for precise camera control without sacrificing content consistency. Mainstream approaches to precise camera control often fall into the "Inpainting Trap", which degrades generation quality. Our key insight is to enable video diffusion models (VDMs) to comprehend camera motion rather than rely on simple inpainting. By using warped depth as camera-control guidance, we unleash the latent 3D priors of VDMs. We design a View-Content Dual-Stream mechanism that injects both the original video and a depth map warped to the target camera trajectory into a pretrained diffusion model. Furthermore, our framework can be trained with LoRA on a synthetic dataset of only 8K videos rendered in Unreal Engine, greatly reducing computational cost.
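The page does not describe how the warped depth guidance is computed. The sketch below shows one common way to forward-warp a single frame's depth map into a new camera view. It assumes a pinhole model with intrinsics `K` shared by both views, a known relative pose `(R, t)` mapping source-camera coordinates to target-camera coordinates, and nearest-pixel splatting with a z-buffer. The function `warp_depth` and its parameters are hypothetical and are not DepthDirector's implementation.

```python
import numpy as np


def warp_depth(depth, K, R, t):
    """Forward-warp a source depth map into a target camera view (hypothetical helper).

    depth: (H, W) array of positive z-depths in the source camera frame.
    K:     (3, 3) pinhole intrinsics, assumed shared by both cameras.
    R, t:  (3, 3) rotation and (3,) translation with X_tgt = R @ X_src + t.
    Returns an (H, W) target depth map; pixels that receive no point are 0.
    """
    H, W = depth.shape
    # Homogeneous pixel coordinates (u, v, 1) for every source pixel.
    u, v = np.meshgrid(np.arange(W), np.arange(H))
    pix = np.stack([u, v, np.ones_like(u)], axis=-1).reshape(-1, 3).T  # (3, H*W)

    # Unproject: X_src = depth * K^-1 [u, v, 1]^T.
    rays = np.linalg.inv(K) @ pix
    pts_src = rays * depth.reshape(1, -1)

    # Move the 3D points into the target camera frame.
    pts_tgt = R @ pts_src + t.reshape(3, 1)
    z = pts_tgt[2]
    valid = z > 1e-6  # drop points behind the target camera

    # Project onto the target image plane and round to the nearest pixel.
    proj = K @ pts_tgt[:, valid]
    u_t = np.round(proj[0] / proj[2]).astype(int)
    v_t = np.round(proj[1] / proj[2]).astype(int)
    z_t = z[valid]

    inside = (u_t >= 0) & (u_t < W) & (v_t >= 0) & (v_t < H)
    u_t, v_t, z_t = u_t[inside], v_t[inside], z_t[inside]

    # Z-buffer: when several points land on one pixel, keep the nearest.
    out = np.full((H, W), np.inf)
    np.minimum.at(out, (v_t, u_t), z_t)
    out[np.isinf(out)] = 0.0  # holes: no source content maps here
    return out


# Usage: a flat scene at depth 2, seen from a camera moved 1 unit backward.
K = np.array([[2.0, 0.0, 2.0], [0.0, 2.0, 1.5], [0.0, 0.0, 1.0]])
depth = np.full((4, 5), 2.0)
same = warp_depth(depth, K, np.eye(3), np.zeros(3))
moved = warp_depth(depth, K, np.eye(3), np.array([0.0, 0.0, 1.0]))
```

With an identity pose the output reproduces the input. Under a camera move, zero-valued pixels mark regions that the source frame never observed. A guidance signal like this tells a generator where the geometry should land under the new trajectory, while those empty regions still have to be synthesized.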

Here we display side-by-side videos comparing our method to top-performing warping-based methods.


Here we display side-by-side videos comparing our method to ReCamMaster, a top-performing baseline, across a number of scenes, evaluating both generation quality and camera-control precision.